
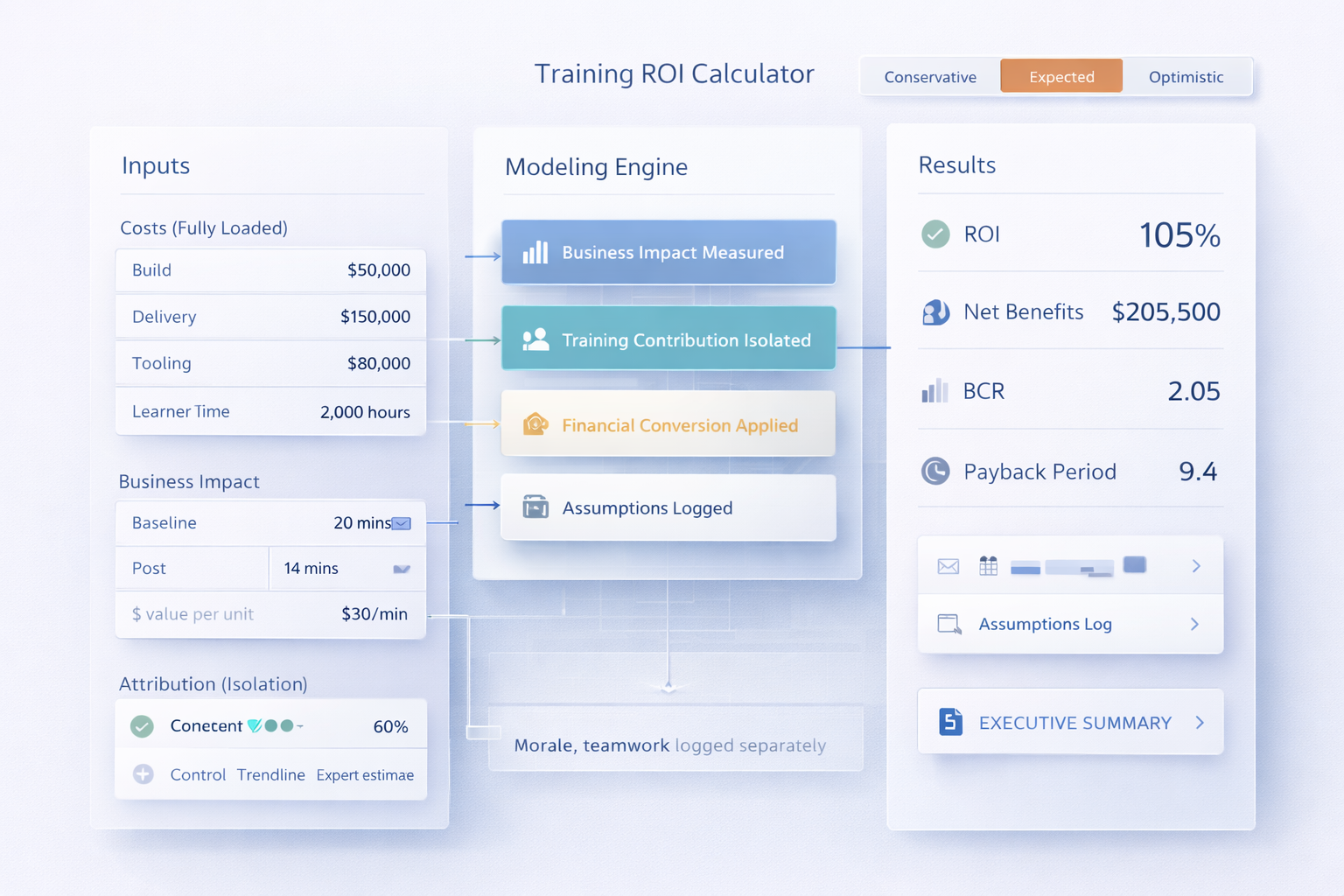

L&D has a measurement problem. It's hard to prove the connection between investments in learning and business outcomes (or at least hard to make that case neatly to your finance team).

In a 2025 survey, nearly 1 in 3 leaders admitted they couldn't connect their L&D investments to their company's revenue or profit margin.

That's a problem, and AI is only exacerbating it.

Take manager training as an example. I've run manager training programs, and I'm sure you have. Years ago, it was easy to get leadership buy-in for manager development. Not only was everyone doing it, but there was an entire body of research demonstrating that managers drive performance and retention.

Today, it's much harder to make the case for investing in middle management, especially when companies are trying to flatten their hierarchies or replace managers entirely with AI.

AI has raised the stakes. But the good news is, it can finally help us close the measurement gap and make a more compelling business case for L&D.

How is AI used in training and development?

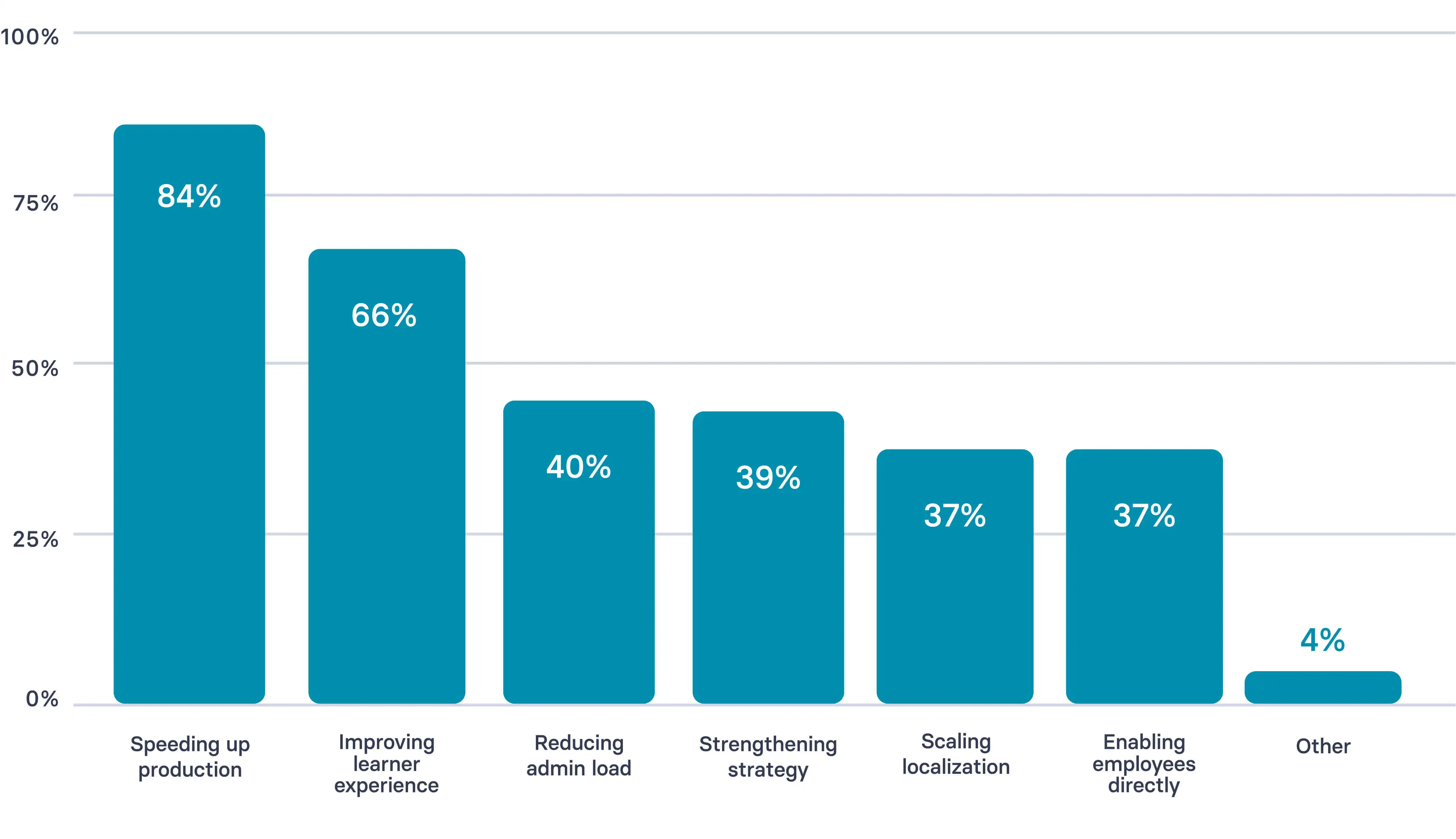

87% of L&D professionals are already using AI according to our 2026 report. How they're using it, however, varies greatly. Some organizations are still experimenting, while a handful are systematically integrating it into their workflows.

Here are four trends I'm seeing in how L&D teams are using AI.

1. Speeding up content production

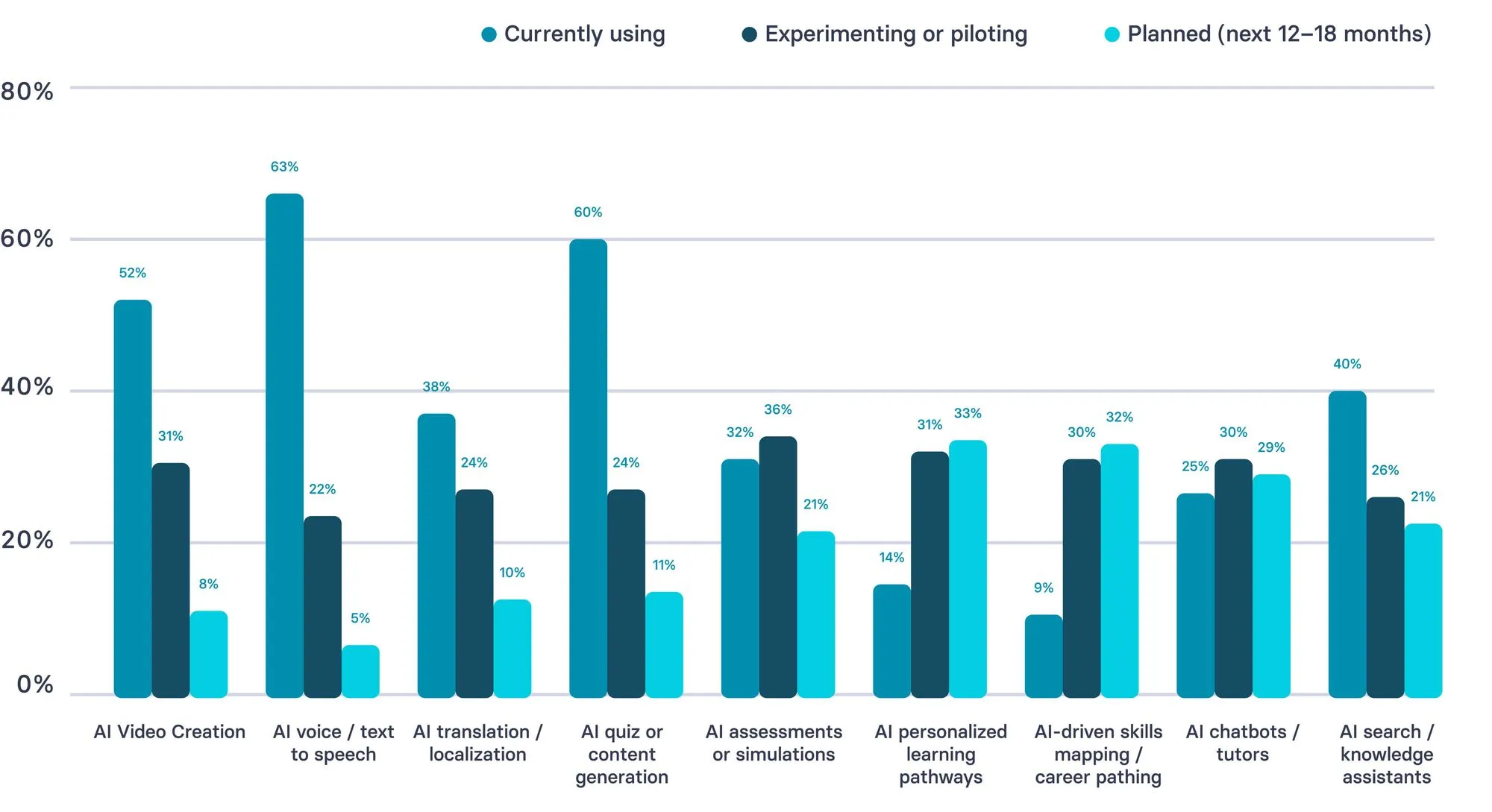

84% of L&D teams are using AI to speed up content production. That includes tasks like drafting content and quizzes (60%), generating narration (63%), creating videos (52%), and localizing materials (38%). Meanwhile, only 39% report using AI to strengthen their strategy.

Even among early adopters, the gap between using AI for speed and using it for impact is significant.

It's worth noting that our survey included 421 respondents, many of whom are customers or practitioners in our networks. Because we're an AI company, adoption rates in our sample likely skew higher. Yet the pattern still holds.

So beyond speed, the real shift is moving from content production to driving measurable impact. That shows up in how L&D teams are designing, delivering, and evaluating learning experiences.

2. Designing for adaptivity

Right now, you likely have an LMS or LXP that includes static learning paths: fixed sets of milestones, like courses and assessments, for learners to complete. These fixed programs are necessary. They establish baselines for cohorts and drive consistency.

The good news is these fixed programs can serve as the foundation for adaptive learning paths. AI-native learning platforms are able to customize existing paths and transform them into dynamic experiences based on the learner's role or prior experience. That means you no longer have to choose between scalability and personalization when designing learning experiences.

That's likely why 33% of L&D teams are planning to implement personalized learning pathways in the next 12 to 18 months.

3. Delivering training in the flow of work

Josh Bersin coined "learning in the flow of work" in 2018 to describe employees getting the information they need, when they need it, without switching contexts. For that to work at scale, training has to be modular. 40% of teams are already using AI search and knowledge assistants to deliver on this.

With AI, we're closer than ever to closing the gap between the moment of need and the right information. Consider a nurse preparing to perform a procedure they haven't done recently. Before entering the room, they can search in natural language, "how do I do this procedure," and get the right guidance immediately from their organization's vetted knowledge base.

Assets are deployed at the moment of need, with flexible delivery standards so you can track what's working and adjust what isn't.

4. Predicting skill gaps

According to LinkedIn's 2026 Talent Velocity Advantage Report, 86% of companies report needing better insights into the skills their organization needs to adapt and grow.

That's not news. We're always seeking ways to better anticipate the demands of the industry and where it will go. That means we not only need to measure our workforce, but also get better at prediction.

What that requires is tooling that excels at pattern recognition. We're not always going to get this right, but we can use AI tools to make pattern-based predictions grounded in historical data. That's what AI is good at: processing massive data sets and learning from them to surface subtle correlations we'd likely miss, without some of the biases we bring, though any bias in the historical data will carry through to its predictions.

The question is where to start. The use cases below are the ones where L&D teams are seeing the most traction.

6 ways to implement AI in your L&D strategy

It's tempting to jump straight into experimenting with AI tools, and if you haven't started yet, you'll find plenty of examples below of how your peers are already using AI.

But before you start experimenting, I'd challenge you to approach it more strategically.

One of the most common mistakes I see is teams starting their AI implementation with tools. I get it, tools are shiny and exciting. But without a clear connection to performance goals, that experimentation rarely translates into meaningful impact.

Instead, start by looking at your L&D roadmap. Identify one area where you know it's time for a reset, whether in how you design or deliver learning, or something else entirely. Focus on defining what good looks like for that experience. Then document where AI will show up in the workflow, who will own the quality of the AI output, and how you'll know if it's improving performance.

Here are the use cases I think are worth trying out.

1. Content generation

It's performance review season, and the People team only changed the rating scale descriptions, but that change touches every single training asset. With an LLM, your instructional designers can update the guidance across facilitator guides, one-pagers, and knowledge hubs in a fraction of the time.

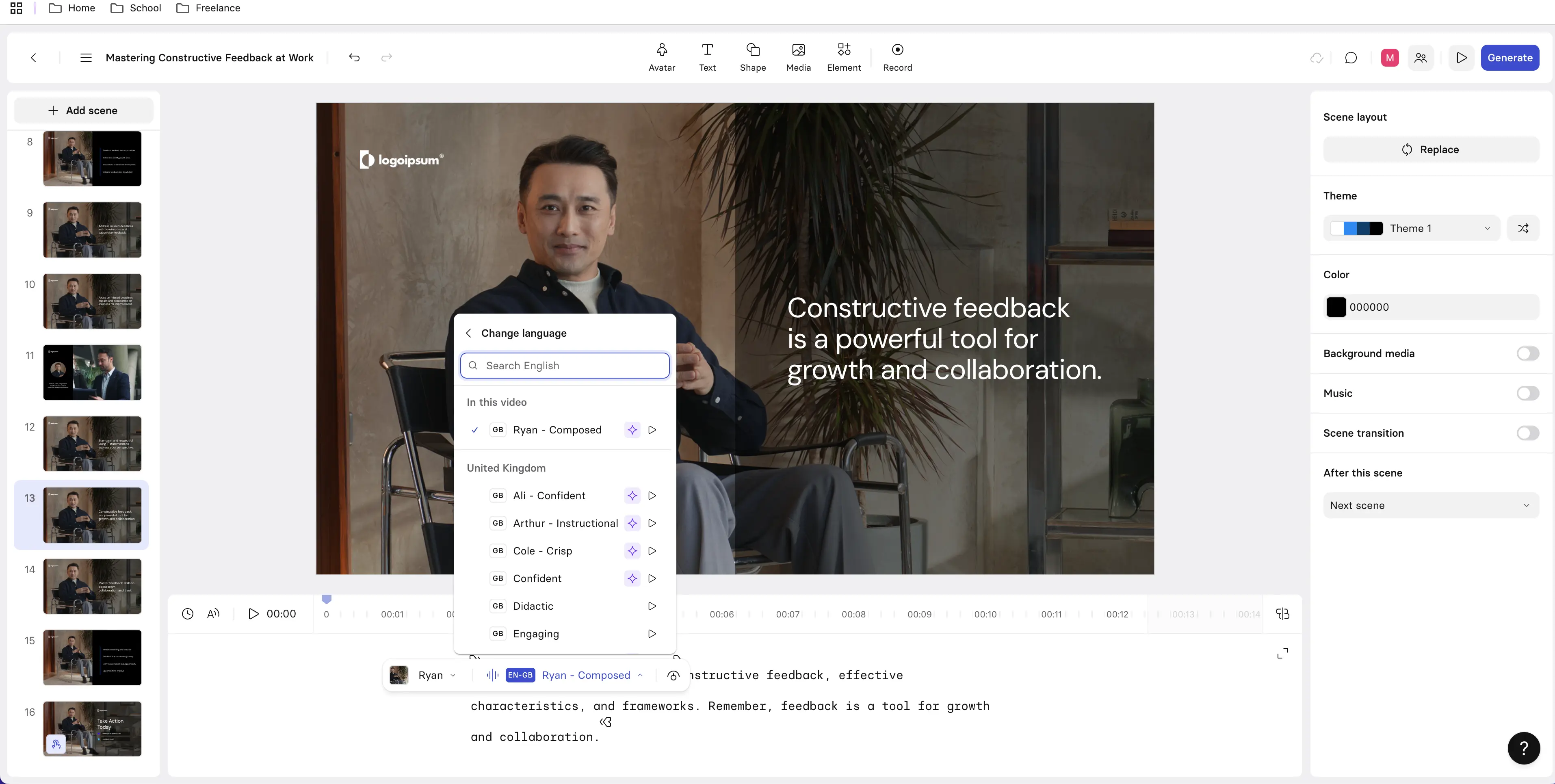

2. Localization

You need to develop technical training materials for operational staff across Latin America. That means turning regulatory content into engaging learning, and doing so in English, Spanish, and Portuguese to start. With an AI video platform, you can produce and localize training across all three languages, have a local team member verify the quality, and when the regulation changes next year, update the source once and republish across all variants.

That's how LATAM Airlines' support training team approached training for its distributed workforce of 37,000 employees, cutting production time by 83% and reaching 16,000+ learners across three languages.

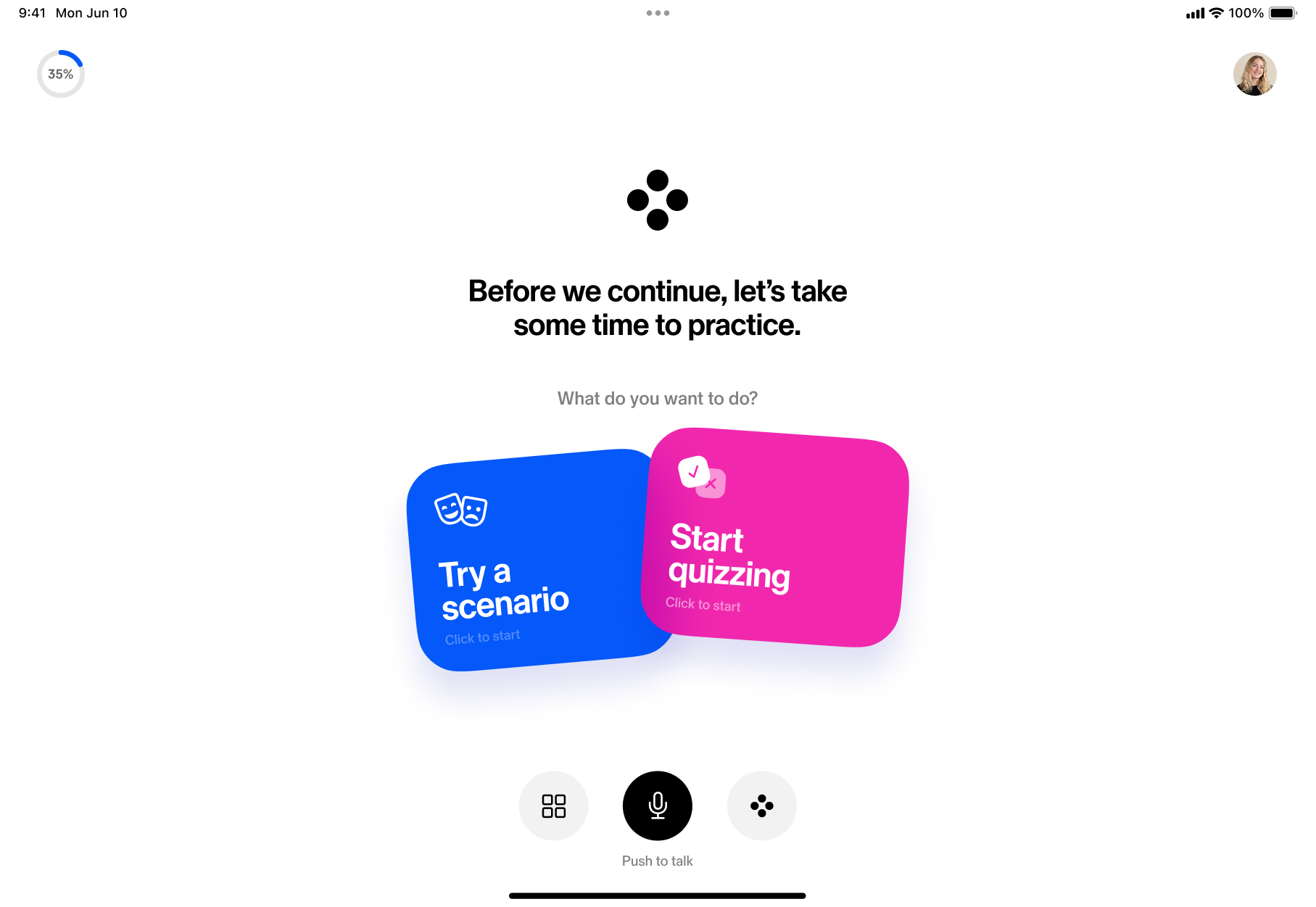

3. Scenario-based role play

Your call center data shows agents consistently struggle with de-escalating frustrated customers, but pulling them off the floor for live role play isn't realistic. With AI simulations built from your three most common escalation patterns, agents practice on their own schedule, get feedback on their responses, and arrive at their next shift better prepared.

4. Just-in-time support

A newly promoted manager is about to have their first one-on-one with a direct report who is going on parental leave, and they have no idea what to do. They ask their AI assistant, and a training resource surfaces immediately, covering key contacts, next steps, and tips on how to be supportive.

5. Personalized learning paths

Two new software engineers join your company with the same experience level, but one comes from outside your industry and needs more context to perform at the same level. Based on their pre-hire assessments, the platform automatically assigns additional industry context modules to one while letting the other skip ahead, and both arrive at their first team sprint with the same foundational knowledge.
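Under the hood, this kind of branching can be as simple as a rule keyed to an assessment score. The Python sketch below is a hypothetical illustration, not how any particular platform implements it: the module names, the `industry_context_score` field, and the 0.7 threshold are all assumptions.

```python
from dataclasses import dataclass

# Hypothetical baseline path every new engineer completes.
BASE_PATH = ["Codebase orientation", "Dev environment setup", "Team sprint practices"]

# Hypothetical add-on modules for hires who need more industry context.
INDUSTRY_CONTEXT_MODULES = ["Industry fundamentals", "Key regulations", "Customer landscape"]


@dataclass
class NewHire:
    name: str
    industry_context_score: float  # assumed pre-hire assessment result, 0.0 to 1.0


def assign_path(hire: NewHire, threshold: float = 0.7) -> list[str]:
    """Prepend industry context modules when the assessment score falls below the threshold."""
    if hire.industry_context_score < threshold:
        return INDUSTRY_CONTEXT_MODULES + BASE_PATH
    # Hires at or above the threshold skip straight to the shared baseline.
    return list(BASE_PATH)


for hire in (NewHire("Engineer A", 0.85), NewHire("Engineer B", 0.40)):
    print(f"{hire.name}: {assign_path(hire)}")
```

A real platform would likely weigh more signals (role, prior experience, on-the-job performance), but the shape is the same: a shared baseline path plus conditional additions.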

6. Skills mapping and gap analysis

Your organization is planning to expand into a new region in 18 months, so your team uploads the business strategy deck and cross-references it with your current skills data. The output tells you which capabilities your workforce already has, which are missing, and which roles are most at risk, giving you enough lead time to build development pathways before the expansion begins.
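At its core, the comparison in this scenario is set arithmetic: the capabilities the strategy requires versus the capabilities the workforce already has. The Python sketch below assumes the required skills have already been extracted from the strategy deck and that your skills data maps roles to skills. All skill names, role names, and the "at risk" heuristic (ranking roles by how few required skills they already cover) are hypothetical.

```python
# Hypothetical capabilities the expansion strategy calls for.
REQUIRED_SKILLS = {"Spanish fluency", "Local tax compliance", "Vendor negotiation", "Regional data privacy"}

# Hypothetical current skills data, keyed by role.
SKILLS_BY_ROLE = {
    "Operations lead": {"Vendor negotiation", "Spanish fluency"},
    "Finance analyst": {"Domestic tax compliance"},
    "IT manager": {"Regional data privacy", "Cloud administration"},
}


def gap_analysis(required: set[str], skills_by_role: dict[str, set[str]]):
    """Return (covered, missing, roles ranked from most to least at risk)."""
    workforce_skills = set().union(*skills_by_role.values())
    covered = required & workforce_skills
    missing = required - workforce_skills
    # Assumed heuristic: the fewer required skills a role already has, the more at risk it is.
    overlap = {role: len(skills & required) for role, skills in skills_by_role.items()}
    at_risk = sorted(overlap, key=overlap.get)
    return covered, missing, at_risk


covered, missing, at_risk = gap_analysis(REQUIRED_SKILLS, SKILLS_BY_ROLE)
print("Already have:", sorted(covered))
print("Missing:", sorted(missing))
print("Most at risk first:", at_risk)
```

In practice, skill labels rarely line up this neatly across systems, so expect to normalize them before a comparison like this is meaningful.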

What are the benefits and challenges of using AI in L&D?

If you try any of the use cases I shared, you'll probably notice some anecdotal benefits, like saving your team time. But if you're looking to make the business case for embedding AI in your L&D strategy, especially if that involves acquiring new tools, you'll want to keep a few important considerations in mind.

1. Capacity

Benefits: Across the board, the most immediate gain L&D leaders report is speed. 88% of teams report saving time on content creation. Faster production means content can be delivered closer to the moment of need and keep pace with the business.

Challenges: Producing content more quickly doesn't necessarily translate to better outcomes. We're producing more content, but not following through to understand whether any of it is changing behavior. I've written about this pattern as readiness debt. The risk for L&D leaders is that AI makes it easy to look productive without being impactful.

2. Cost

Benefits: 45% of teams are already reporting cost savings from AI. That's because they can stop outsourcing specialized tasks like graphic design or video production. Those line items add up quickly, especially every time there's a content refresh.

Challenges: AI can be expensive, especially at the enterprise level. Licensing costs and usage caps can impact the way you design and use these tools (more on that in the tools section below).

3. Governance

Benefits: Building AI governance into your workflows is an opportunity to document processes your team has probably been running informally for years: how content gets created and reviewed, how updates get approved and prioritized, how evaluation is conducted, and how localization gets managed. AI makes consistency harder to ignore, which means it also makes standardization easier to justify.

Challenges: Most enterprises are still learning how to govern AI. That means L&D teams can find themselves in a position where they don't quite know who owns what tool, or how to bring in new tooling.

4. Business impact

Benefits: 41% of L&D teams say AI is already contributing to business impact by enabling them to create content more quickly and meet business needs. It is also shortening the time between when we deliver content and when we can see signals of it working.

Challenges: Most teams haven't reached the maturity to demonstrate measurable business impact from AI in L&D. Only 19% are using AI for evaluation, compared to 65% using it for content development.

How do you measure AI's impact on training outcomes?

Performing a robust evaluation of training outcomes can feel like a herculean task some days, which is why we sometimes settle for NPS or completion rates. And it's likely why 63% of L&D teams need more support assessing impact.

Once you add AI into the equation, you're adding another layer of complexity. So you need a streamlined way to assess performance before and after AI is introduced.

Start by documenting the current state. This requires you to have:

- One observable behavior that the training was designed to change or impact

- Two signals in the workflow: one leading indicator (something you can observe happening during or right after training) and one lagging indicator (something that shows up in performance data weeks later)

- A proxy measurement for business impact

(I know, you just rolled your eyes at proxy measurement and signals.)

Here's what I mean, using the example of a customer service training. Let's say you rolled out an eLearning course for 1,000 customer service reps globally. You wanted to help them better handle customers who were upset, so they could de-escalate the situation and find a resolution.

Next, describe your baseline using this sentence template:

"We expected behavior X to change; we see evidence it did (or didn't); metric Y moved in the expected direction (or didn't). Here's what we'll adjust next."

So a completed example for customer service might look like:

"We expected reps to more consistently de-escalate upset customers using the three-step resolution framework. We see evidence they did: 1. managers are reporting fewer escalations to senior staff, and 2. average handle time on difficult calls has dropped. Our proxy metric, CSAT on resolved complaints, moved in the expected direction. What we'd adjust next: reps are still inconsistent in the opening 60 seconds of a difficult call, which suggests the acknowledgment step needs reinforcement."

That's your baseline: your "before AI" state. Most importantly, it tells you exactly where AI can help. In this case, reps need more practice in the first 60 seconds.

That's hard to fix with a one-off course, because it takes repeated practice and feedback.

You turn to AI to build in more practice and feedback loops. You identify a select group of the 1,000 reps who need more practice and try out an AI coaching tool. You build a few scenarios focused specifically on the first 60 seconds of a difficult call. After four weeks, the pilot group shows measurably more consistent opening acknowledgments in manager observations, and their escalation rate drops further than the control group's.
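The pilot-versus-control comparison above is essentially a difference-in-differences: how much the pilot group's escalation rate moved, compared with how much the control group's moved over the same period. Here is a minimal Python sketch; the `GroupStats` structure and every number in it are made-up placeholders, not real results.

```python
from dataclasses import dataclass


@dataclass
class GroupStats:
    name: str
    escalations_before: int
    calls_before: int
    escalations_after: int
    calls_after: int

    def rate_before(self) -> float:
        return self.escalations_before / self.calls_before

    def rate_after(self) -> float:
        return self.escalations_after / self.calls_after

    def change(self) -> float:
        # Change in escalation rate, in percentage points when formatted with %.
        return self.rate_after() - self.rate_before()


# Illustrative placeholder numbers, not survey or customer data.
pilot = GroupStats("pilot", escalations_before=120, calls_before=1000, escalations_after=80, calls_after=1000)
control = GroupStats("control", escalations_before=118, calls_before=1000, escalations_after=105, calls_after=1000)

# Difference-in-differences: how much more the pilot's rate moved than the control's.
did = pilot.change() - control.change()
print(f"Pilot change: {pilot.change():+.1%}")
print(f"Control change: {control.change():+.1%}")
print(f"Difference-in-differences: {did:+.1%}")
```

Subtracting the control group's change helps strip out shifts that would have happened anyway, such as a seasonal dip in escalations. With small pilot groups, a gap like this can still be noise, so it's worth running a significance test (for example, a two-proportion test) before treating it as evidence.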

Rather than rolling it out to everyone, you make the tool available on-demand for reps whose call data flags a pattern of struggling in that opening moment. Over the next three months, you track whether reps who used the AI scenarios show improvement in first-contact resolution rates (the metric your customer service leadership cares about).

If they show improvement, that's your business case for investing in AI. You've turned behavioral signals into patterns, and patterns into a story the business recognizes.

That's the business case AI helps you make.

What are the best AI tools for training and development?

Figuring out the best AI tools for L&D is like finding the perfect pair of jeans. You have to try on a lot of pairs to find the right fit, at the right price, that works with what you already own.

While the metaphor may be a bit simplistic, here's what I mean. Every time you add to your tech stack, you do so with a budget and organizational context. That means understanding what tools you already have (whether L&D owns them or not) and how they do or don't work together. Security and accuracy concerns, integration challenges, and legal restrictions can also slow down AI procurements.

That's why I want to start with a tool you likely already have access to, but probably don't control: the LLM.

1. Large Language Models (LLMs)

I think of LLMs as the workhorses for L&D teams. They're where we're seeing the most lift, whether that is time and cost savings from faster content production, or capacity freed up for more strategic work.

An LLM can conduct research, summarize and analyze datasets and trends, and draft everything from learning objectives to assessments and surveys.

Most enterprise teams already have or are piloting an LLM. The best part: when an LLM is vetted by your organization, it can be configured to integrate with your existing knowledge sources. So whether it's Claude, ChatGPT, Perplexity, Gemini, or something else, make it work for you. The best LLM is the one that amplifies your institutional knowledge and reduces friction in your workflow.

That said, if you're in the exploratory phase or on an RFP committee for an LLM, I recommend diving into Dr. Philippa Hardman's breakdown of the AI model stack for instructional design.

2. Learning Management Systems and Learning Experience Platforms (LMS/LXP)

Whereas an LMS has traditionally been where learning is delivered, tracked, and measured, an LXP has focused more on the learning experience itself. Today, the lines between the two are blurring.

There are few AI-native LMS or LXP platforms. More often, you'll see AI added on, whether for admin tasks like enrollments and reminders or for analytics like dashboards and reporting. The greatest potential lies in personalized learning experiences: the ability for employees to use the platform the way they would an LLM, asking questions much as they would a tutor, but in a controlled environment where the information is carefully vetted.

Few platforms are able to deliver this experience, which is why I recommend Sana.

Sana supports learning in the flow of work with features like its AI tutor. Employees can search for answers in natural language, get just-in-time guidance without leaving the platform, and receive personalized content recommendations.

Works best for: Teams looking to replace a legacy system or bring in a new platform, and teams moving from static content libraries to dynamic and personalized learning.

Falls short when: Teams have a robust SCORM content library they're looking to maintain within one platform.

3. eLearning authoring tools

eLearning authoring tools are what enable you to build the content hosted in your LMS or LXP. Some platforms have an internal authoring tool, which allows you to build courses or programs with components like knowledge checks or interactive scenarios.

Many eLearning authoring tools have AI added on, and there's a structural reason for that. These tools were designed to output content as SCORM packages, which are essentially fixed once published: the platform delivering them can't adapt what's inside at runtime. That limits your ability to adapt content to individual learners or capture data beyond completion rates and assessment scores. That's why you see platforms like Sana taking an AI-native authoring approach.

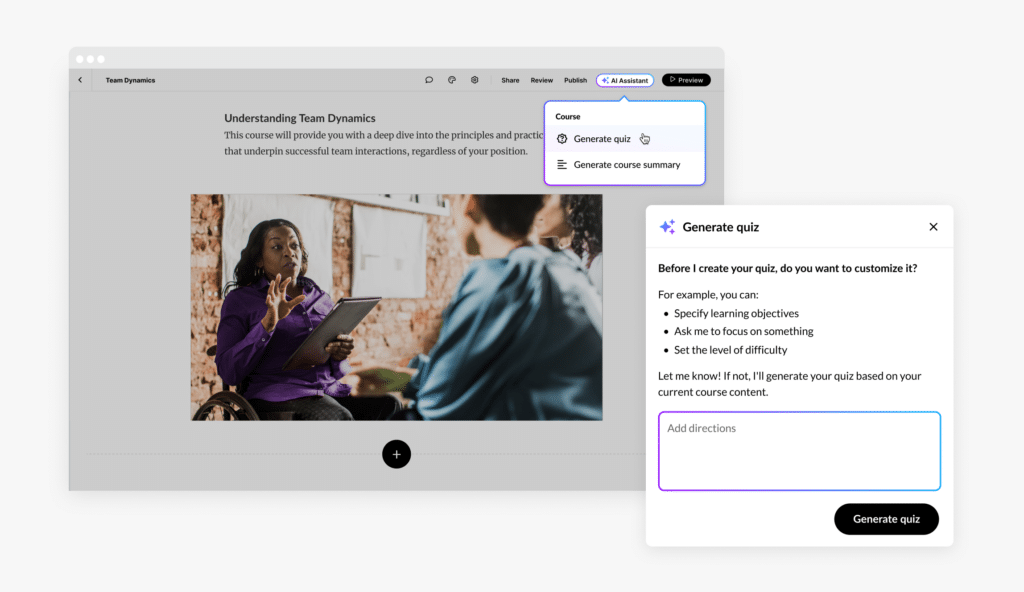

That said, one of the primary ways AI is working with eLearning authoring tools is by transforming how content gets built. Whereas before, an instructional designer would go into these tools and craft a course by selecting each component manually, now they can use natural language and existing content to get a significant head start. That's the case with Articulate 360's AI Assistant.

Articulate 360 is a suite of eLearning tools, including Rise, a browser-based authoring tool. With their AI Assistant, teams can build courses using natural language and existing materials like slide decks or PDFs. It drafts content and generates assessments, imagery, and narration.

Works best for: Teams already using Articulate who want to accelerate content production without changing their workflow, rapid course builds from existing source material, and organizations that need SCORM or xAPI-compliant output for an existing LMS.

Falls short when: You have a limited budget. The AI Assistant requires a separate subscription.

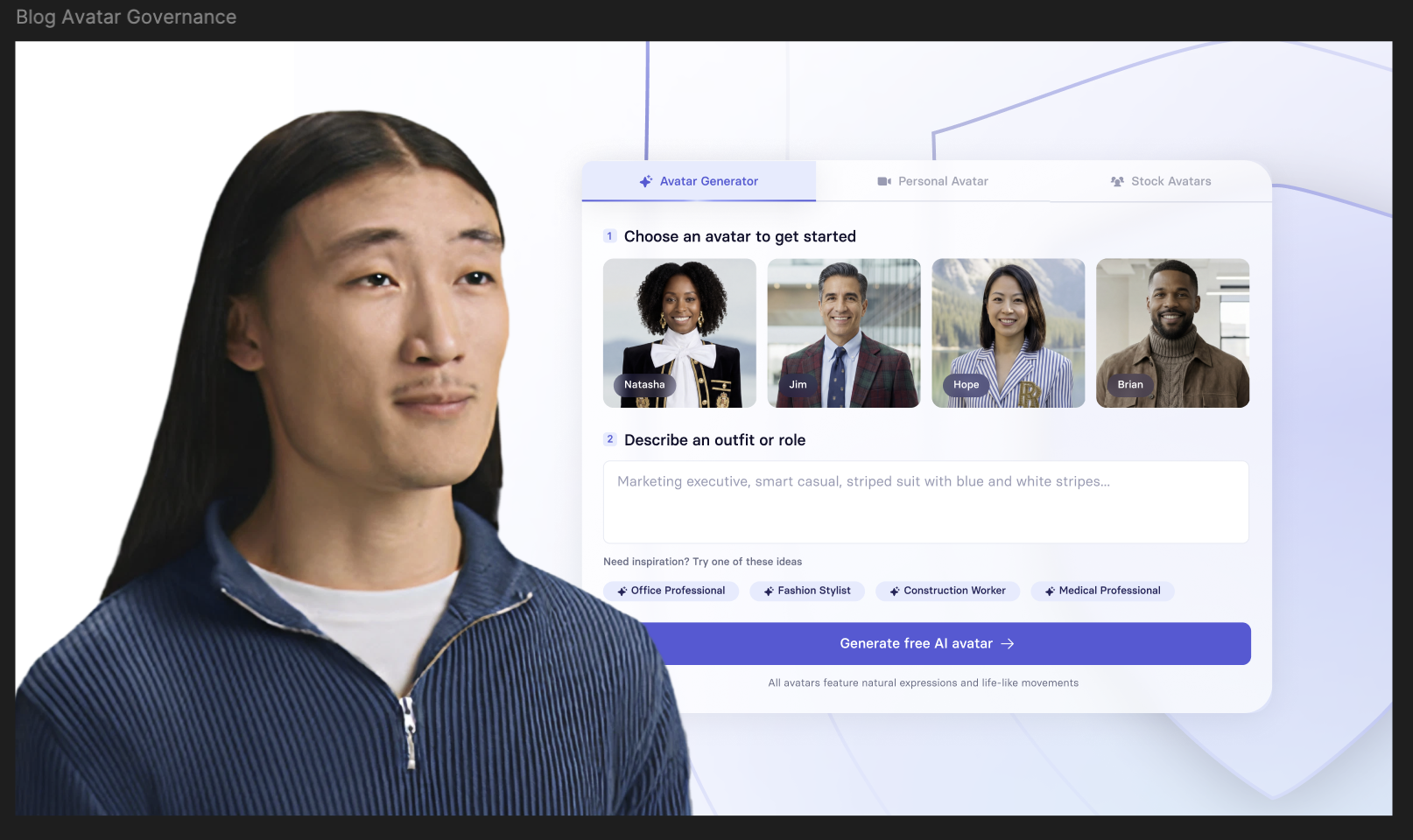

4. Video platforms

L&D teams are increasingly investing in training solutions that offer personalized learning at scale, like video training. AI video platforms transform what was a time- and cost-intensive production into a lightweight workflow that any instructional designer can pick up quickly.

With AI-powered platforms like Synthesia, instructional designers can direct the video creation process. They can start with a prompt, a script, or an existing document like a transcript or SOP, and produce a refined training video that can be published or distributed in the flow of work in over 160 languages.

Synthesia turns written training content into presenter-led videos without traditional production workflows. It's especially useful once objectives and structure are defined, giving teams a fast way to produce onboarding, internal communications, and frequently updated training at scale.


Works best for: Onboarding, training that needs frequent updates or localization, and SME-driven video creation.

Falls short when: Teams need complex scenario simulations or highly customized visual storytelling.

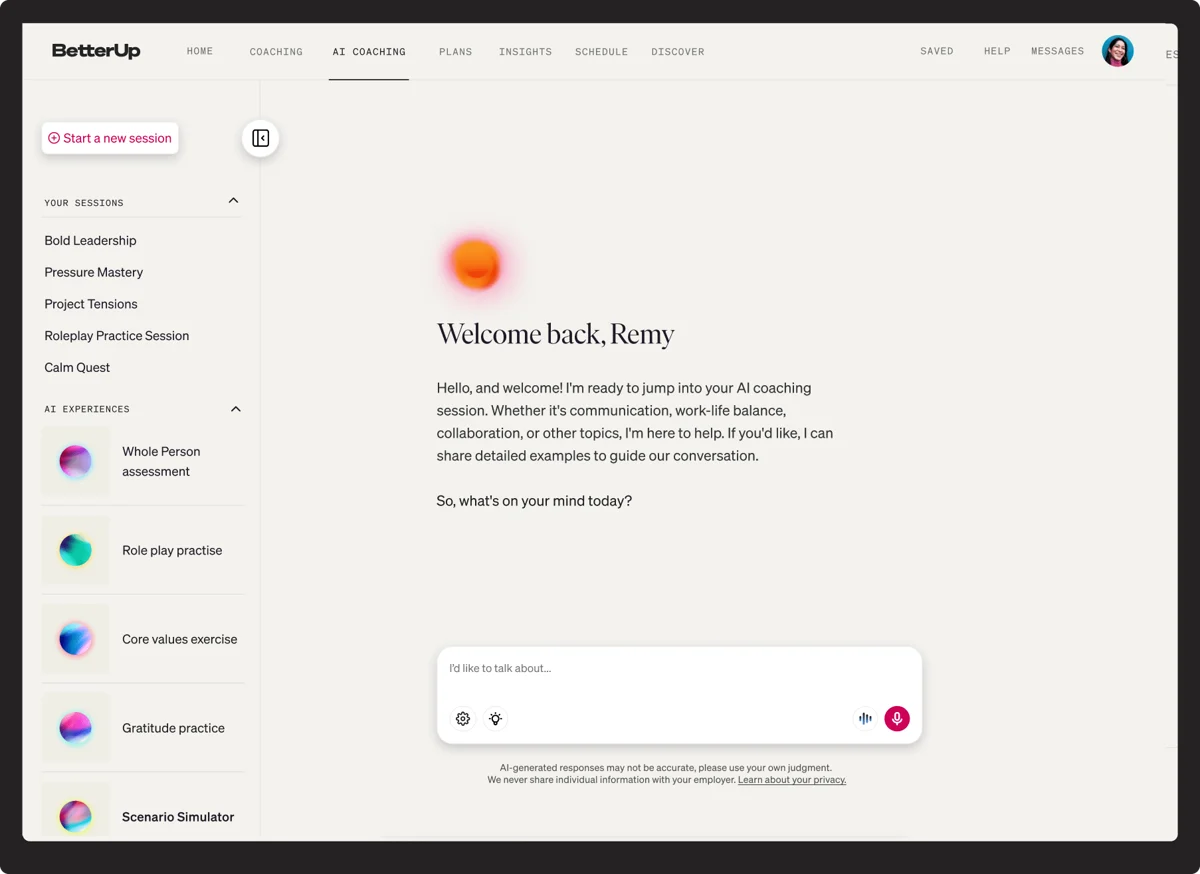

5. Coaching

When I first started my L&D career, coaching was reserved for senior or "high potential" employees. Good coaches are expensive, rightfully so, and few L&D teams have the budget to make even affordable coaching accessible to their broader employee population.

In the past decade, platforms like BetterUp have sought to change this by connecting experienced global coaches to employees through subscription models. Now, these companies are making that experience even more affordable through AI coaching.

BetterUp Grow is an AI coaching platform built on behavioral science and informed by over five million coaching sessions. It is customizable to your organization. That means you can share your values, career frameworks, and business priorities so conversations stay aligned with how your organization defines growth.

Works best for: Scaling practice and feedback reinforcement after development programs like manager training, and offering just-in-time support around critical moments like performance reviews.

Falls short when: Teams are looking for what makes great coaches worth the investment — the human connection, long-term developmental coaching focused on leadership, career growth, or the emotional and social dimensions of performance.

Beyond the core stack

In addition to the categories above, there's another set of tools worth considering when assessing your tech stack. AI notetakers, meeting transcription tools, knowledge management platforms, and enterprise search all fall into this bucket. These tools are often owned by IT or operations, but they're valuable data sources that can help bring learning closer to the flow of work.

Your team will likely also use a handful of lighter-weight tools, expensed on a credit card or through individual subscriptions. If your team needs infographic capabilities, for example, a tool like Venngage is worth exploring.

Regardless of which solution you're considering, I recommend thinking through the questions in the checklist below.

What does the future of AI in L&D look like?

If you've made it this far, congratulations, that was quite a trek. Before you go, here are a few nuggets from our survey worth noodling on.

While I'm not in the prediction business, I do think the data is directionally interesting. Only 47% of respondents said they think the LMS will be the backbone of their stack in three years. I understand the sentiment, but I'm skeptical of the timeline.

So many organizations rely on their LMS for compliance tracking and content libraries that the change management required would be too great a lift for most. Sure, more agile organizations will get rid of it, or maybe never acquire one in the first place. But I don't see that changing any time soon. Remember when everyone said SCORM would die? Still waiting on that one.

That said, one insight I find genuinely valuable is that 58% of respondents believe AI gives L&D more strategic influence.

Personally, I think that's because it gives us the capability we've always needed but have rarely been resourced for: to truly demonstrate the power of workplace development. To be a real partner to the business. The kind that unlocks talent and maybe even helps place the next big bet.

Amy Vidor, PhD is a Learning & Development Evangelist at Synthesia, where she researches learning trends and helps organizations apply AI at scale. With 15 years of experience, she has advised companies, governments, and universities on skills.

Frequently asked questions

How is AI being used in learning and development today?

AI adoption in L&D is broad but uneven. According to our 2026 AI in L&D Report, 87% of L&D teams are using AI in some form, but most of that use is concentrated in content production. Teams are furthest along in design and development: drafting scripts, generating quizzes, creating videos, and localizing content across languages.

Where adoption is weakest is where the impact potential is highest. Only 19% of L&D teams are using AI for evaluation, and use cases like personalized learning paths, skills mapping, and predictive analytics are still in early piloting stages for most organizations. The gap between how fast teams are producing content and how consistently they are measuring whether it works is the defining challenge for L&D right now.

What are the best AI tools for L&D teams?

It depends on what you're trying to do. For AI-native learning management and personalized delivery, Sana is worth evaluating. For AI-assisted course authoring within a tool your team likely already knows, Articulate 360's AI Assistant accelerates content production without changing your workflow. For training video creation and localization at scale, Synthesia. For AI coaching and behavior change at the manager and employee level, BetterUp Grow. For infographics and visual learning content, Venngage.

Before you evaluate any of these tools, assess what other tools you have access to in your organization. If your organization has an LLM deployed, explore what it can do for L&D workflows before adding something new. If your LMS has AI capabilities you haven't fully used, start there. The best tool is the one that fits your workflow and integrates with your existing stack.

How do you measure AI's impact on training outcomes and prove ROI to leadership?

Most L&D teams measure AI's impact through efficiency gains first: time saved on content production, reduction in external vendor costs, faster localization turnaround. These are real and worth capturing, but they are not the argument that moves leadership. The stronger case is behavioral impact.

To get there, define the specific behavior you expect training to change, identify a signal in your existing systems that would show that behavior is shifting, and track it consistently enough to separate a real trend from noise. Behavioral signals, like how consistently a manager delivers timely feedback or how a rep opens a discovery call, tend to move before lagging OKRs or KPIs do.

When you can show leadership that those signals are improving, you have a credible story about training impact. The measurement section of this post walks through a practical framework for building that case.

How do you build a business case for AI in L&D?

AI investment for its own sake rarely gets approved. What does get approved is a solution to a problem leadership already cares about. AI that reduces time to productivity for new hires, keeps compliance training current without full rebuilds, or scales onboarding consistently across regions — that's a conversation leadership is ready to have.

The strongest business cases combine three things: a specific performance problem, a clear picture of how AI changes the workflow, and a credible plan for measuring whether it worked. Starting with lower-risk, high-visibility use cases like video creation or localization lets you build a track record. That track record is what makes the case for larger investments in personalization, coaching, or analytics.

How can AI personalize learning at scale across a large organization?

Personalized learning at scale means connecting AI to data about what learners already know, what role they're in, and how they're performing on the job. In practice, that means integrating your LMS or LXP with performance data so content recommendations, sequencing, and reinforcement adjust to the individual rather than following a fixed curriculum.

Most enterprise teams are still in early stages here. Our research shows personalized learning paths skew toward piloting rather than full deployment. The teams making the most progress are starting with high-frequency roles where performance data is clearest, typically new hire onboarding and sales enablement, and expanding from there as they build confidence in the data and governance model.

What are the biggest risks of using AI in L&D, and how do L&D leaders manage them?

According to our 2026 AI in L&D Report, the most commonly cited barriers are security concerns (58%), accuracy concerns (52%), and lack of internal expertise (46%). For L&D leaders, the risks that tend to cause the most damage are quality drift, governance gaps, and measurement avoidance.

Quality drift happens when AI accelerates output without clear ownership over accuracy, tone, and instructional standards. Governance gaps emerge when tools are adopted without IT or legal involvement, particularly around learner data. And measurement avoidance, producing more content faster without building in evaluation, is the pattern most likely to undermine your business case over time. All three are manageable if governance and measurement are treated as implementation decisions from the start rather than problems to solve later.

How do you ensure data privacy and governance when rolling out AI for training?

Get IT and legal involved before you scale. The key questions to resolve are whether the tool processes or stores learner data, whether it complies with GDPR, CCPA, or other regulations relevant to your regions, and who owns the data if the vendor relationship ends.

Beyond compliance, governance means documenting what AI supports and what stays human-led before content volume makes that harder to control. Standards for accuracy, inclusivity, and brand voice should be written down and not assumed. Review and approval workflows should be in place before you scale.

Our research found that 59% of L&D practitioners are still not using learner personal data with AI, with many citing unclear approval processes as the reason. Getting ahead of that ambiguity is one of the most practical things an L&D leader can do before expanding AI use across their function.